Musk's Orbital Data Centre Idea Is Getting More Stupid By The Day

How does anyone believe this?

Whatever Musk has been smoking, I don’t want any, because it seems his last few functioning neurons have fallen out of his nose. Originally, his orbital data idea was laughable. But now that details have emerged about how Musk plans to pull off this grand scheme, it seems even more idiotic than once believed. These are either the drivelings of a power-crazed moron or something more insidious is going on.

Let’s start at the beginning. Despite what Musk claims, orbital AI data centres are not cheaper than terrestrial ones. As I have covered before, it costs roughly nine times more to launch AI data centres into space than to operate them here on Earth, so even during an energy crisis, orbital data centres are significantly more expensive. On top of that, it would cost tens of trillions of dollars to build and deploy the 100 GW of solar arrays to space that Musk has promised, and they would need to be completely replaced every five years or so when the satellites they are attached to deorbit. Oh, and building these satellites on the Moon, as Musk has suggested, doesn’t solve any of these problems and, in fact, will make them worse. Yet, Musk still wants to deploy an orbital AI data centre constellation of one million satellites!

The Satellite

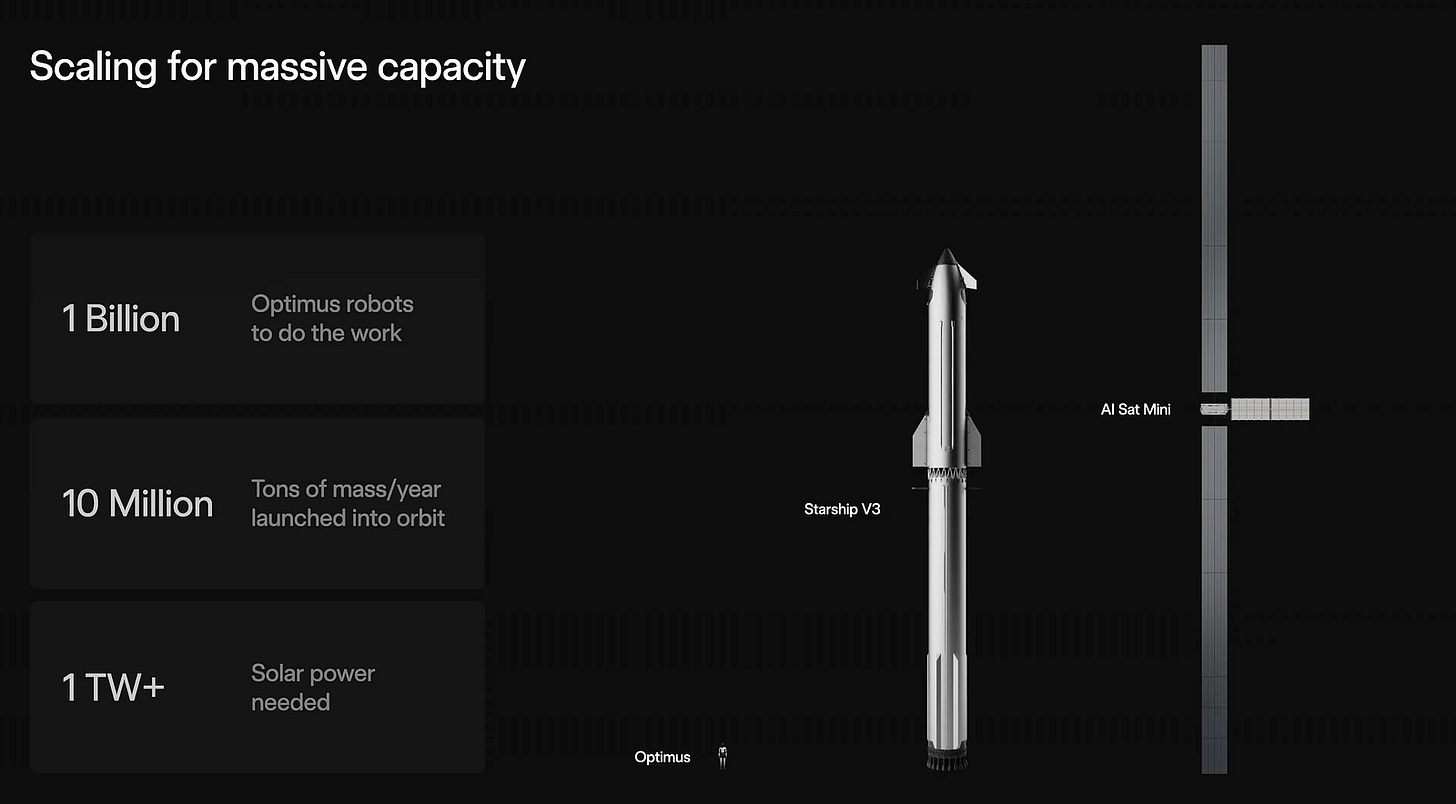

As usual, Musk is pig-headedly carrying on, and in a recent presentation for Terrafab (with more on that in a bit), he showed off the first rough rendering of one of these AI data centre satellites and some details about SpaceX’s plans to get them to orbit.

The “AI Sat Mini”, as shown above, has a solar array of roughly six metres by 150 metres (using Starship V3 as a scale reference), for a total area of 900 square metres. In orbit, that can theoretically provide 216 kW of power when not in Earth’s shadow. This makes total sense, as Musk stated that this satellite can provide 100 kW of AI computing power, and SpaceX recently confirmed that its planned AI satellites would operate at altitudes of 500–2,000 km. At these orbits, the satellite would experience roughly 35 minutes of darkness per orbit, meaning it would require a significantly overpowered solar panel and a sizable battery to remain operational during that time. As such, the power demands, the computational power delivered, and the orbital location all make sense so far. That’s a great start; well done, SpaceX!
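As a rough sanity check on those figures, here is a minimal Python sketch. The array dimensions, the 216 kW peak output and the 35 minutes of darkness come from the text above; the solar constant, Earth's radius and gravitational parameter, and the choice of a circular 500 km orbit are my own assumptions for illustration, and battery losses are ignored.

```python
import math

# Figures taken from the article
ARRAY_WIDTH_M = 6
ARRAY_LENGTH_M = 150
PEAK_POWER_KW = 216
ECLIPSE_MIN_PER_ORBIT = 35

# Assumptions (not from the article)
SOLAR_CONSTANT_W_M2 = 1361       # approximate sunlight intensity above the atmosphere
EARTH_RADIUS_KM = 6371           # mean Earth radius
EARTH_MU_KM3_S2 = 398600.4418    # Earth's gravitational parameter
ALTITUDE_KM = 500                # lower end of the stated 500-2,000 km range

# Array area and the power density implied by the 216 kW figure
area_m2 = ARRAY_WIDTH_M * ARRAY_LENGTH_M
power_density_w_m2 = PEAK_POWER_KW * 1000 / area_m2
implied_efficiency = power_density_w_m2 / SOLAR_CONSTANT_W_M2

# Period of a circular orbit: T = 2*pi*sqrt(a^3 / mu)
semi_major_axis_km = EARTH_RADIUS_KM + ALTITUDE_KM
period_min = 2 * math.pi * math.sqrt(semi_major_axis_km**3 / EARTH_MU_KM3_S2) / 60

# Average power over a full orbit once the eclipse is accounted for
sunlit_fraction = 1 - ECLIPSE_MIN_PER_ORBIT / period_min
orbit_average_kw = PEAK_POWER_KW * sunlit_fraction

print(f"Area: {area_m2} m^2, implied efficiency: {implied_efficiency:.1%}")
print(f"Orbital period: {period_min:.1f} min, orbit-averaged power: {orbit_average_kw:.0f} kW")
```

Under these assumptions, the implied panel efficiency is roughly 18%, and the orbit-averaged output lands comfortably above the 100 kW the satellite is meant to deliver, which is consistent with the oversized array.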

This is going to sound crazy, but the AI Sat Mini is nearly identical to a hypothetical estimate I made months ago for Musk’s orbital data centre satellites, based on the Nvidia GB200 NVL72 rack (read more here). I estimated that an LEO AI satellite based on this 1,360 kg rack would require 880 kg of solar arrays, 471 kg of batteries, 172 kg of radiators for cooling, and 262 kg of radiation shielding to protect the chips inside, for a total mass of 3,145 kg. This Nvidia rack delivers 120 kW of AI compute power, and luckily, all of these components scale with power demand, so we can estimate that Musk’s AI Sat Mini has a mass of roughly 2,620 kg, equivalent to a large car.
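The scaling step above can be sketched in a few lines of Python. The component masses and power figures are the ones quoted in the text; the assumption that every component scales linearly with power is the article's own simplification, and the variable names are mine.

```python
# Component masses (kg) for the hypothetical 120 kW satellite described above
REFERENCE_MASSES_KG = {
    "nvidia_rack": 1360,
    "solar_arrays": 880,
    "batteries": 471,
    "radiators": 172,
    "radiation_shielding": 262,
}
REFERENCE_POWER_KW = 120   # Nvidia GB200 NVL72 rack
TARGET_POWER_KW = 100      # AI Sat Mini, per Musk

# Total mass of the 120 kW reference design
reference_total_kg = sum(REFERENCE_MASSES_KG.values())

# Assumption: mass scales linearly with compute power
scale = TARGET_POWER_KW / REFERENCE_POWER_KW
ai_sat_mini_mass_kg = reference_total_kg * scale

print(f"Reference design: {reference_total_kg} kg")
print(f"Scaled AI Sat Mini: {ai_sat_mini_mass_kg:.0f} kg")
```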

That is lighter than you might expect. Let’s optimistically assume these satellites can fold up incredibly small, and Starship’s ability to launch a large number of them is only limited by their mass (rather than volume). Let’s also optimistically assume Starship can reach its promised 100 tons to LEO payload. In that case, Starship can launch 38 of these AI Sat Minis per launch. And, according to my calculations, a fully reusable Starship, if it is ever possible, would cost $70 million (read more here).
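Here is a minimal sketch of the per-launch arithmetic. It assumes "100 tons" means metric tonnes, that packing is limited only by mass (as stated above), and it uses my $70 million estimate for a fully reusable Starship launch. The launch cost per satellite is not a figure from the text; it simply falls out of the same numbers.

```python
import math

PAYLOAD_TO_LEO_KG = 100_000      # assumption: "100 tons" read as metric tonnes
SATELLITE_MASS_KG = 2620         # estimated AI Sat Mini mass
LAUNCH_COST_USD = 70_000_000     # estimated fully reusable Starship launch cost

# Only whole satellites can fly, so round down
satellites_per_launch = math.floor(PAYLOAD_TO_LEO_KG / SATELLITE_MASS_KG)

# Share of the launch bill carried by each satellite
launch_cost_per_satellite = LAUNCH_COST_USD / satellites_per_launch

print(f"Satellites per launch: {satellites_per_launch}")
print(f"Launch cost per satellite: ${launch_cost_per_satellite / 1e6:.2f} million")
```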

So, how much would this satellite cost?

Well, that Nvidia rack, which I based my estimate on, costs $5.9 million, and the cost of the space-rated solar panels, radiators, shielding and construction required to make it a satellite will run into the millions of dollars. So, let’s be insanely generous and say that each AI Sat Mini will cost $8 million a pop.

With these satellite estimates and very optimistic launch assumptions, we can figure out how Musk plans to deploy, maintain, and use this constellation. And guess what? It makes no sense at all.

Launching & Maintaining the Satellites

Okay, so how long would it take to deploy a million of these satellites? And how much would it cost?

At a rate of 38 AI Sat Minis per launch, it would require 26,316 Starship launches to get a million of these satellites into orbit. If SpaceX launched a Starship full of these satellites every single day, it would take over 72 years to reach the million in orbit that Musk has promised. That many satellites would cost $8 trillion, and that many launches would cost $1.8 trillion, for a total cost of $9.8 trillion!
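For anyone who wants to check the deployment maths, here is a short sketch using the estimates from this article ($8 million per satellite, $70 million per launch, 38 satellites per launch). It rounds up to whole launches and assumes exactly one launch per day with no failures.

```python
import math

TARGET_SATELLITES = 1_000_000
SATELLITES_PER_LAUNCH = 38
SATELLITE_COST_USD = 8_000_000
LAUNCH_COST_USD = 70_000_000
LAUNCHES_PER_YEAR = 365          # assumption: one launch every single day

# Round up, since a partly filled Starship still counts as a launch
launches_needed = math.ceil(TARGET_SATELLITES / SATELLITES_PER_LAUNCH)
years_needed = launches_needed / LAUNCHES_PER_YEAR

satellite_bill_usd = TARGET_SATELLITES * SATELLITE_COST_USD
launch_bill_usd = launches_needed * LAUNCH_COST_USD
total_bill_usd = satellite_bill_usd + launch_bill_usd

print(f"Launches needed: {launches_needed:,}")
print(f"Years at one launch per day: {years_needed:.1f}")
print(f"Total cost: ${total_bill_usd / 1e12:.2f} trillion")
```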

Except these costs aren’t even accurate, because the satellites won’t last that long. To reduce orbital debris, SpaceX places its satellites in an orbit that will decay and deorbit roughly five years after deployment. I can’t find anything to confirm or deny whether the same will be true for these AI satellites.

But, even if Musk plans to keep these AI satellites in orbit perpetually, the chips inside them won’t last that long. Meta found that the AI chips it was using were failing at a rate of 9% annually. These satellites will be operating in far more extreme conditions, so the failure rate could be significantly higher. But let’s be generous and assume the failure rate will be the same.

That means that after 72 years of daily launches and deploying a million AI satellites to orbit, there will only be 154,000 functional satellites in orbit. At this point, equilibrium is met, as the number of AI satellites SpaceX can deploy from its daily launches per year will be the same as the number of satellites lost to failure per year.
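The equilibrium can be sketched with a simple year-by-year model. This is my own simplification: 9% of the existing fleet fails each year, and that year's new launches are then added on top. The function name `fleet_after` is hypothetical and purely for illustration.

```python
SATELLITES_PER_LAUNCH = 38
LAUNCHES_PER_YEAR = 365          # one launch per day
ANNUAL_FAILURE_RATE = 0.09       # Meta's reported failure rate, assumed to hold in orbit


def fleet_after(years, launched_per_year, failure_rate):
    """Working fleet after `years`, losing a fixed share each year
    before that year's launches are added (a simplifying assumption)."""
    fleet = 0.0
    for _ in range(years):
        fleet = fleet * (1 - failure_rate) + launched_per_year
    return fleet


launched_per_year = SATELLITES_PER_LAUNCH * LAUNCHES_PER_YEAR

# At equilibrium, yearly losses equal yearly launches: fleet * rate = launched
equilibrium_fleet = launched_per_year / ANNUAL_FAILURE_RATE

fleet_after_72_years = fleet_after(72, launched_per_year, ANNUAL_FAILURE_RATE)

print(f"Satellites launched per year: {launched_per_year:,}")
print(f"Equilibrium fleet: {equilibrium_fleet:,.0f}")
print(f"Fleet after 72 years: {fleet_after_72_years:,.0f}")
```

The same function can be reused for faster build-outs. For example, `fleet_after(15, 120_000, 0.09)` comes out at just over a million working satellites under this model.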

So, what does SpaceX have to do to get a million operational AI satellites in orbit in a realistic amount of time?

Well, let’s assume they want to complete the constellation in 15 years. To achieve that, they would need to launch 120,000 satellites per year. Over the 15 years, they would launch 1.8 million satellites, but 800,000 of them would fail (as part of our 9% failure rate), leaving a total operational fleet of one million satellites. This equates to 3,158 Starship launches per year, or nearly nine launches per day. For some context, the current launch rate for Starship is just five per year.

How much would this cost?

So, that is 1.8 million satellites costing $8 million each ($14.4 trillion), carried on 47,370 rocket launches costing $70 million each ($3.316 trillion), for a total cost of $17.716 trillion.
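The 15-year bill can be reproduced with the sketch below. It uses the same per-satellite and per-launch estimates as before, and rounds the yearly launch count up to whole launches; the variable names are mine.

```python
import math

BUILD_YEARS = 15
SATELLITES_PER_YEAR = 120_000
SATELLITES_PER_LAUNCH = 38
SATELLITE_COST_USD = 8_000_000
LAUNCH_COST_USD = 70_000_000

# Whole launches needed each year, then over the full build-out
launches_per_year = math.ceil(SATELLITES_PER_YEAR / SATELLITES_PER_LAUNCH)
total_launches = launches_per_year * BUILD_YEARS
total_satellites = SATELLITES_PER_YEAR * BUILD_YEARS

satellite_bill_usd = total_satellites * SATELLITE_COST_USD
launch_bill_usd = total_launches * LAUNCH_COST_USD
total_bill_usd = satellite_bill_usd + launch_bill_usd

print(f"Launches per year: {launches_per_year:,} ({launches_per_year / 365:.1f} per day)")
print(f"Satellites: ${satellite_bill_usd / 1e12:.3f} trillion")
print(f"Launches: ${launch_bill_usd / 1e12:.3f} trillion")
print(f"Total: ${total_bill_usd / 1e12:.3f} trillion")
```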

Youch, that is a lot. But it gets worse.

In order to keep a million satellites in the constellation, it needs to be maintained. So, each year, SpaceX would have to launch 90,000 AI Sat Minis to replace the roughly 9% of the constellation that failed. That equates to 2,368 Starship launches per year, or roughly 6.5 per day. Using our previous costs ($70 million per launch and $8 million per satellite), that equates to $886 billion per year in maintenance!
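A quick sketch of the yearly maintenance bill, again using this article's cost estimates. It treats the launch count as a yearly average, so it is allowed to be fractional; that is a simplification on my part.

```python
FLEET_SIZE = 1_000_000
FAILURE_PERCENT = 9              # assumed annual failure rate, as a whole percentage
SATELLITES_PER_LAUNCH = 38
SATELLITE_COST_USD = 8_000_000
LAUNCH_COST_USD = 70_000_000

# Satellites that must be replaced every year to hold the fleet steady
replacements_per_year = FLEET_SIZE * FAILURE_PERCENT // 100

# Average launch cadence needed just for replacements
launches_per_year = replacements_per_year / SATELLITES_PER_LAUNCH
launches_per_day = launches_per_year / 365

annual_maintenance_usd = (
    replacements_per_year * SATELLITE_COST_USD
    + launches_per_year * LAUNCH_COST_USD
)

print(f"Replacements per year: {replacements_per_year:,}")
print(f"Launches per day: {launches_per_day:.1f}")
print(f"Annual maintenance: ${annual_maintenance_usd / 1e9:.0f} billion")
```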

Compared to Earth

Back down on Earth (and in reality), it costs $35 billion to build 1 GW of AI compute power and $2.5 billion in annual operational and maintenance costs. How does Musk’s plan compare?

Well, a million of these 100 kW satellites would provide 100 GW of computational power. Let’s keep it simple and say that it will cost Elon roughly $9.8 trillion to build and launch a million of these satellites and $886 billion a year to maintain the constellation’s fleet size. That means Musk’s orbital AI data centre would cost $98 billion per GW to construct and $8.86 billion per GW per year to operate and maintain.

In other words, Musk’s orbital AI data centre is roughly 2.8 times more expensive to build and 3.5 times more expensive to maintain and operate than the current ones we have on Earth!
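The per-gigawatt comparison can be laid out as a short sketch. The orbital totals are the simplified figures from this section (build cost with no failures, plus the yearly replacement bill), and the terrestrial figures are the ones quoted above; nothing else is assumed.

```python
SATELLITES = 1_000_000
POWER_PER_SATELLITE_KW = 100

ORBITAL_BUILD_USD = 9.8e12       # build and launch a million satellites
ORBITAL_ANNUAL_USD = 886e9       # yearly replacement bill

EARTH_BUILD_PER_GW_USD = 35e9    # terrestrial build cost per GW
EARTH_ANNUAL_PER_GW_USD = 2.5e9  # terrestrial operations and maintenance per GW

# 1 GW = 1,000,000 kW
total_gw = SATELLITES * POWER_PER_SATELLITE_KW / 1_000_000

orbital_build_per_gw = ORBITAL_BUILD_USD / total_gw
orbital_annual_per_gw = ORBITAL_ANNUAL_USD / total_gw

build_ratio = orbital_build_per_gw / EARTH_BUILD_PER_GW_USD
annual_ratio = orbital_annual_per_gw / EARTH_ANNUAL_PER_GW_USD

print(f"Orbital build cost: ${orbital_build_per_gw / 1e9:.0f} billion per GW")
print(f"Orbital annual cost: ${orbital_annual_per_gw / 1e9:.2f} billion per GW")
print(f"Build ratio: {build_ratio:.1f}x, annual ratio: {annual_ratio:.1f}x")
```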

That is not an expense the AI industry, let alone xAI, could stomach. A recent report found that, even with very optimistic revenue projections, the AI industry will be $800 billion a year short of breaking even by 2030. In other words, instead of drastically increasing expenses, the industry needs to focus on reducing them! Even in the face of an energy crisis or industry regulation, this colossal expense makes this entire idea a non-starter from the get-go.

Oh, and increasing the size of these AI satellites, as Musk has suggested, won’t make any of these problems better. This technology scales more or less linearly, so it doesn’t matter whether it is one giant AI satellite or loads of these AI Sat Minis. The most a Starship can launch is roughly 3.8 MW of AI compute power, whether that is a single giant satellite or 38 AI Sat Minis at 100 kW each.

The Laughable Scale

Starship’s development began nearly a decade ago when Musk abandoned the idea of a fully reusable Falcon 9. Yet, even after all this time and billions of dollars spent on the project, it is still utterly useless. Its theoretical payload is a fraction of what was promised; it has yet to even make it to orbit; it has yet to become fully reusable; and it only launches at a rate of five per year (with a sizeable failure rate). To give you a sense of just how slow Starship development is progressing, it was supposed to have launched for Mars by now (read more here).

In order to build this million-strong constellation in 15 years, Starship needs to successfully reach orbit, nearly triple its claimed 35-ton payload capacity to 100 tons, become fully reusable at that payload capacity, and increase its launch rate by a factor of roughly 630, from five per year to 3,158 per year.

The idea that Starship will be able to do anything like this in even the mid-future is side-splittingly laughable. They have so far to go before any of this is even remotely possible, and their rate of development is practically glacial. This is beyond fantasy thinking.

Terrafab

All of these factors make Musk’s recent Terrafab announcement even funnier.

Terrafab is a $20 billion joint venture between Tesla and Musk’s recently merged xAI and SpaceX. The idea is to build a fully consolidated semiconductor fabrication facility in Austin, Texas, that contains every stage of chip production within a single facility. As the name suggests, the goal is to produce a terawatt’s worth of computing hardware per year. But this output will be split between two chips: the AI5 chip, which will power Tesla’s FSD and Optimus, will receive 20% of production, and the D3 chip, which will be used for SpaceX’s AI data centre satellites, will receive 80%.

For some sense of scale, Musk himself estimates that the current global AI compute production is 20 GW, meaning this one facility will produce 50 times more AI compute-power hardware than the globe currently does!

There is so much wrong here.

Let’s start with the total lack of coherence.

Musk wants to create a factory that can produce 800 GW of orbital-grade AI data centre chips. Yet, Musk recently said that SpaceX will deploy 100 GW of solar power in orbit per year to power its orbital AI data centres (read more here). The satellite Musk has previewed has 100 kW of computing power, and he wants to deploy a million of them. But that will take decades and cost trillions of dollars, and after all that, a million of these would amount to only 100 GW of computing power.

This doesn’t seem consistent at all. Why is Musk planning to overproduce these chips by a factor of eight compared to how much solar he intends to deploy? Why is the amount of solar he wants to deploy so colossal compared to what is even highly optimistically possible with Starship? The pieces of this puzzle aren’t fitting together.
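To make the mismatch concrete, here is a rough sketch that lines up the Terrafab figures against the Starship estimate from earlier (3.8 MW of compute per launch). The launch counts are not claims from Musk or SpaceX; they are simply what these numbers imply if every chip produced were actually flown.

```python
TERRAFAB_OUTPUT_GW = 1000            # one terawatt per year
D3_SHARE = 0.80                      # share going to orbital D3 chips
GLOBAL_AI_COMPUTE_GW = 20            # Musk's own estimate of current production
ORBITAL_SOLAR_GW_PER_YEAR = 100      # solar Musk says SpaceX will deploy yearly
COMPUTE_PER_LAUNCH_MW = 3.8          # 38 satellites x 100 kW, estimated above

d3_output_gw = TERRAFAB_OUTPUT_GW * D3_SHARE

# How far chip output overshoots the planned solar, and today's global output
chips_vs_solar = d3_output_gw / ORBITAL_SOLAR_GW_PER_YEAR
terrafab_vs_world = TERRAFAB_OUTPUT_GW / GLOBAL_AI_COMPUTE_GW

# Launches per year implied by flying each amount of compute (1 GW = 1,000 MW)
launches_for_d3 = d3_output_gw * 1000 / COMPUTE_PER_LAUNCH_MW
launches_for_solar = ORBITAL_SOLAR_GW_PER_YEAR * 1000 / COMPUTE_PER_LAUNCH_MW

print(f"D3 output: {d3_output_gw:.0f} GW per year ({chips_vs_solar:.0f}x the planned solar)")
print(f"Terrafab vs current global output: {terrafab_vs_world:.0f}x")
print(f"Launches per year to fly all D3 chips: {launches_for_d3:,.0f}")
print(f"Launches per year to match 100 GW of solar: {launches_for_solar:,.0f}")
```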

And then there is the cost-to-production ratio of Terrafab. TSMC Arizona has cost north of $40 billion, yet it currently produces only 10,000 12-inch (300mm) wafers per month, with plans to ramp up to 30,000 in the near future. For some sense of scale here, Nvidia was projected to consume 535,000, or 77% of the wafers used in AI chips in 2025, meaning that the total wafers used for AI in 2025 were somewhere around 690,000.

So, this one facility, built by the industry experts at twice the cost of Terrafab, can only deliver the equivalent of 4.3% of the chips consumed by the AI industry last year. Yet somehow, Terrafab is expected to produce 50 times more chips than the entire AI industry consumed last year?

For this idea to work, Terrafab has to produce 1,163 times more annual compute power than TSMC Arizona, while simultaneously costing half as much to build.

It’s not even like Musk has a good track record with developing in-house silicon. Remember Tesla’s “revolutionary” Dojo supercomputer, which it sank more than a billion dollars into? It was expected to use chips designed in-house by Tesla that would give the company a gargantuan advantage over the entire AI industry. Well, Tesla cancelled it because the chips sucked, as they were an “evolutionary dead end”.

Terrafab simply won’t accomplish what Musk claims it will; I don’t know how else to phrase it. It’s like me claiming my old VW Golf could reach 400 mph after you watched me crash it into a tree a few moments earlier. Only someone with a truly pickled brain would think it was even remotely possible.

Why?

Actually, since SpaceX has an upcoming IPO, it is more akin to me claiming that if you gave me a billion dollars, my old VW Golf would reach 400 mph. Let’s not forget this is possibly the largest IPO in history.

I think this is the reason why Musk is throwing out these insane, nonsensical ideas and numbers. They aren’t meant to make sense or even be possible — they are meant to make headlines and woo investors.

It doesn’t matter that the current science heavily suggests that AIs simply won’t get better by putting more data and compute power behind them (read more here). It doesn’t matter how utterly preposterous it is to use Starship to launch this many satellites. It doesn’t matter that the economics of building and maintaining AI data centre satellites are several times worse than terrestrial ones. It doesn’t matter that Musk hasn’t figured out where he will get enough data to justify this huge AI data centre expansion. It doesn’t matter that Musk’s chip-making track record is horrific, yet he plans to outperform the industry leaders by more than a factor of a thousand. It doesn’t matter that none of the figures he claims as part of this harebrained scheme add up.

The whole thing is messy, incoherent, moronic, and flies in the face of the laws of reality. But it isn’t supposed to make sense. It is, in my opinion, intended to be a barrage of chest-beating to bamboozle FOMO-drenched investors out of every cent they have. After all, it isn’t like Musk hasn’t stooped that low before.

Musk has proven time and time again that we should take everything he says with a heavy pinch of salt. With regards to this upcoming IPO, it might require an ocean’s worth of the stuff.

Thanks for reading! Everything expressed in this article is my opinion, and should not be taken as financial advice or accusations.
